Multi-modal topic inferencing from videos

Any organization that has a large media archive struggles with the same challenge – how can we transform our media archives into business value? Media content management is hard, and so is content discovery at scale. Content categorization by topics is an intuitive approach that makes it easier for people to search for the content they need. However, content categorization is usually deductive and doesn’t necessarily appear explicitly in the video. For example, content that is focused on the topic of ‘healthcare’ may not actually have the word ‘healthcare’ presented in it, which makes the categorization an even harder problem to solve. Many organizations turn to tagging their content manually, which is expensive, time-consuming, error-prone, requires periodic curation, and is not scalable.

In order to make this process much more consistent and effective, cost and timewise, we introduce Multi-modal topic inferencing in Video Indexer. This new capability can intuitively index media content using a cross-channel model to automatically infer topics. The model does so by projecting the video concepts onto three different ontologies – IPTC, Wikipedia, and the Video Indexer hierarchical topic ontology (see more information below). The model uses transcription (spoken words), OCR content (visual text), and celebrities recognized in the video using the Video Indexer facial recognition model. The three signals together capture the video concepts from different angles much like we do when we watch a video.

Topics vs. Keywords

Video Indexer’s legacy Keyword Extraction model highlights the significant terms in the transcript and the OCR texts. Its added value comes from the unsupervised nature of the algorithm and its invariance to the spoken language and the jargon. The main difference between the existing keyword extraction model and the topics inference model is that the keywords are explicitly mentioned terms whereas the topics are inferred, for example, higher-level implicit concepts by using a knowledge graph to cluster similar detected concepts together.

Example

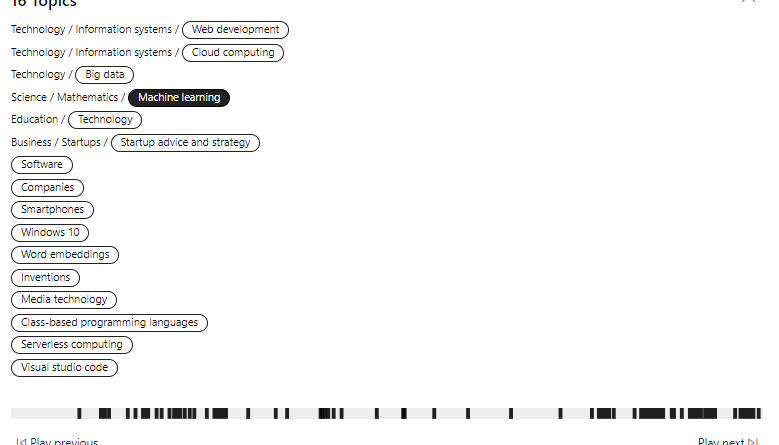

Let’s look at the opening keynote of the Microsoft Build 2018 developers’ conference which presented numerous products and features as well as the vision of Microsoft for the near future. The main theme of Microsoft leadership was how AI and ML are infused into the cloud and edge. The video is over three and a half hours long which would take a while to manually label. It was indexed by Video Indexer and yielded the following topics: Technology, Web Development, Word Embeddings, Serverless Computing, Startup Advice and Strategy, Machine Learning, Big Data, Cloud Computing, Visual Studio Code, Software, Companies, Smartphones, Windows 10, Inventions, and Media Technology.

The experience

Let’s continue with the Build keynote example. The topics are available both on the Video Indexer portal on the right as shown in Figure 2, as well as through the API using the Insights JSON like in Figure 3 where both IPTC topics like “Science and Technology” and Wikipedia categories topics like “Software” appear side by side.

Under the hood

The artificial intelligence models applied under the hood in Video Indexer are illustrated in Figure 4. The diagram represents the analysis of a media file from its upload, shown on the left-hand side, to the insights on the far-right hand side. The bottom channel applies multiple computer vision algorithms, OCR, Face Recognition. Above, you’ll find the audio channel starting from fundamental algorithms such as language identification and speech-to-text, higher level models like keyword extraction, and topic inference, which are based on natural language processing algorithms. This is a powerful demonstration of how Video Indexer orchestrates multiple AI models in a building block fashion to infer higher level concepts using robust and independent input signals from different sources.

Video Indexer applies two models to extract topics. The first is a deep neural network that scores and ranks the topics directly from the raw text based on a large proprietary dataset. This model maps the transcript in the video with the Video Indexer Ontology and IPTC. The second model applies spectral graph algorithms on the named entities mentioned in the video. The algorithm takes input signals like the Wikipedia IDs of the celebrities recognized in the video, which is structured data with signals like OCR and transcript that are unstructured by nature. To extract the entities mentioned in the text, we use Entity Linking Intelligent Service aka ELIS. ELIS recognizes named entities in free-form text so that from this point on we can use structured data to get the topics. We later build a graph based on the similarity of the entities’ Wikipedia pages and cluster it to capture different concepts within the video. The final phase ranks the Wikipedia categories according to its posterior probability to be a good topic where two examples per cluster are selected. The flow is illustrated in Figure 5.

Ontologies

Wikipedia categories – Categories are tags that could be used as topics. They are well edited, and with 1.7 million categories, the value of this high-resolution ontology is both in its specificity and its graph-like connections with links to articles as well as other categories.

Video Indexer Ontology – The Video Indexer Ontology is a proprietary hierarchical ontology with over 20,000 entries and a maximum depth of three layers.

IPTC – The IPTC ontology is popular among media companies. This hierarchically structured ontology can be explored on IPTC's NewsCode. IPTC topics are provided by Video Indexer per most of the Video Indexer ontology topics from the first level layer of IPTC.

The bottom line

Video Indexer’s topic model empowers media users to categorize their content using an intuitive methodology and optimize their content discovery. Multi-modality is a key ingredient for recognizing high-level concepts in video. Using a supervised deep learning-based model along with an unsupervised Wikipedia knowledge graph, Video Indexer can understand the inner relations within media files, and therefore provide a solution that is accurate, efficient, and less expensive than manual categorization.

If you want to convert your media content into business value, check out Video Indexer. If you’ve indexed videos in the past, we encourage you to re-index your files to experience this exciting new feature.

Have questions or feedback? Using a different media ontology and want it in Video Indexer? We would love to hear from you!

Visit our UserVoice to help us prioritize features, or email VISupport@Microsoft.com with any questions.

Source: Azure Blog Feed