Accelerate real-time big data analytics with Spark connector for Microsoft SQL Databases

Apache Spark is a unified analytics engine for large-scale data processing. Today, you can use the built-in JDBC connector to connect to Azure SQL Database or SQL Server to read or write data from Spark jobs.
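As a rough illustration of the built-in JDBC route, here is a minimal PySpark sketch. The helper names (`sqlserver_jdbc_url`, `jdbc_options`, `read_table`, `write_table`) and the server, database, and table names in the usage note are hypothetical. It also assumes that the Microsoft JDBC Driver for SQL Server is on the Spark classpath and that `spark` is an existing `SparkSession`.

```python
from typing import Dict

# Driver class shipped with the Microsoft JDBC Driver for SQL Server.
SQLSERVER_DRIVER = "com.microsoft.sqlserver.jdbc.SQLServerDriver"


def sqlserver_jdbc_url(server: str, database: str, port: int = 1433) -> str:
    """Build a JDBC URL for SQL Server or Azure SQL Database."""
    return f"jdbc:sqlserver://{server}:{port};databaseName={database}"


def jdbc_options(url: str, table: str, user: str, password: str) -> Dict[str, str]:
    """Collect the options understood by Spark's built-in JDBC data source."""
    return {
        "url": url,
        "dbtable": table,
        "user": user,
        "password": password,
        "driver": SQLSERVER_DRIVER,
    }


def read_table(spark, options: Dict[str, str]):
    """Load a table into a DataFrame (assumes `spark` is a SparkSession)."""
    return spark.read.format("jdbc").options(**options).load()


def write_table(df, options: Dict[str, str], mode: str = "append") -> None:
    """Write a DataFrame back to the database through the JDBC data source."""
    df.write.format("jdbc").options(**options).mode(mode).save()
```

A call might look like `read_table(spark, jdbc_options(sqlserver_jdbc_url("myserver.database.windows.net", "mydb"), "dbo.Clients", user, password))`, where every name is a placeholder.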

The Spark connector for Azure SQL Database and SQL Server enables SQL databases, including Azure SQL Database and SQL Server, to act as an input data source or output data sink for Spark jobs. It allows you to use real-time transactional data in big data analytics and persist results for ad hoc queries or reporting.

Compared to the built-in JDBC connector, this connector provides the ability to bulk insert data into SQL databases, which can be 10x to 20x faster than row-by-row insertion. The Spark connector for Azure SQL Database and SQL Server also supports Azure Active Directory (AAD) authentication, so you can securely connect to your Azure SQL database from Azure Databricks using your AAD account. It provides an interface similar to the built-in JDBC connector, so it is easy to migrate your existing Spark jobs to the new connector.

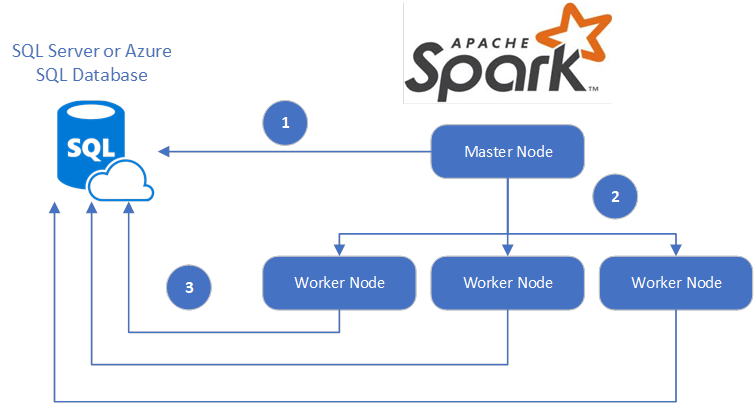

The Spark connector for Azure SQL Database and SQL Server utilizes the Microsoft JDBC Driver for SQL Server to move data between Spark worker nodes and SQL databases:

- The Spark master node connects to SQL Server or Azure SQL Database and loads data from a specific table or using a specific SQL query.

- The Spark master node distributes data to worker nodes for transformation.

- The worker nodes connect to SQL Server or Azure SQL Database and write data to the database. The user can choose to use row-by-row insertion or bulk insert.
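The read, transform, and write steps above can be sketched in PySpark. This sketch uses Spark's built-in JDBC data source rather than the connector's own API, so it does not show the connector's bulk insert. The `batchsize` write option only controls how many rows the built-in source sends per round trip. The function names and the `transform` callback are hypothetical, and `spark` is assumed to be an existing `SparkSession`.

```python
from typing import Callable, Dict


def query_as_table(sql: str, alias: str = "src") -> str:
    """Wrap a SQL query so it can be passed as the JDBC `dbtable` option."""
    return f"({sql.strip().rstrip(';')}) AS {alias}"


def with_batch_size(options: Dict[str, str], rows_per_batch: int) -> Dict[str, str]:
    """Return a copy of the options with the JDBC write batch size set."""
    if rows_per_batch < 1:
        raise ValueError("rows_per_batch must be at least 1")
    updated = dict(options)
    updated["batchsize"] = str(rows_per_batch)
    return updated


def run_job(spark, source_options: Dict[str, str],
            sink_options: Dict[str, str], transform: Callable) -> None:
    """Read from the database, transform, and write the result back."""
    # Step 1: load data from a table or from a wrapped SQL query.
    source_df = spark.read.format("jdbc").options(**source_options).load()
    # Step 2: the transformation is executed by Spark across the cluster.
    result_df = transform(source_df)
    # Step 3: write the result to the sink table.
    result_df.write.format("jdbc").options(**sink_options).mode("append").save()
```

To read from a query instead of a whole table, set `source_options["dbtable"]` to the value returned by `query_as_table(...)`.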

To get started, visit the azure-sqldb-spark repository on GitHub. The same repository also contains sample Azure Databricks notebooks and sample scripts in Scala. You can find more details in the online documentation.

You might also want to review the Apache Spark SQL, DataFrames, and Datasets Guide and the Azure Databricks documentation to learn more about Spark and Azure Databricks.

Source: Azure Blog Feed
